Rethinking AI in Engineering: physics-inspired machine learning

Artificial intelligence (AI) is increasingly discussed across engineering and technology. As new tools and methods emerge, AI is often presented as a way to accelerate workflows and expand design capabilities.

At the same time, many engineers approach these claims with caution. In deterministic engineering disciplines, such as computational electromagnetics, methods must be physically grounded, reproducible, and transparent. Probabilistic outputs or opaque decision-making are not simply inconvenient; they undermine trust in the results.

It is therefore not surprising that, for many engineers, the word "AI" evokes scepticism rather than excitement. As senior engineers in the field have noted in recent discussions, that scepticism is strongest when AI is framed as a replacement for physics-based methods.

However, this conclusion often stems from a narrow interpretation of what AI means in an engineering context.

There is a different, and increasingly important, class of methods that does not aim to replace physics, numerical solvers, or engineering judgement. Instead, it treats machine learning as a mathematical tool, designed to work with physics rather than around it. This is the domain of physics-inspired machine learning, and it represents a fundamentally different approach to using AI in engineering.

Physics-inspired AI vs traditional machine learning in engineering

Traditional machine-learning approaches are typically data-driven. Models are trained to identify patterns in large datasets and to make predictions based purely on statistical correlations. In many applications, such as image recognition, natural language processing, or recommendation systems, this approach is entirely appropriate. Accuracy is measured statistically, and occasional errors are acceptable.

Engineering simulation is different.

In high-fidelity modelling, system behaviour is governed by known physical laws, often expressed as partial differential equations. Accuracy is not defined statistically, but physically: does the result satisfy Maxwell’s equations, boundary conditions, and conservation laws? Can it be trusted across the full range of relevant operating conditions?

Physics-inspired machine learning starts from this premise. Rather than learning only from data, these models are explicitly constrained by physical knowledge. This can take several forms, for example:

- Embedding physical laws directly into the training process

- Structuring models to respect known symmetries or conservation properties

- Combining surrogate models directly with trusted numerical solvers
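As a minimal sketch of the first item, embedding a physical law in training, the loss below adds the residual of a governing equation to the usual data-misfit term. A 1-D Helmholtz equation, u'' + k²u = 0, stands in for Maxwell's equations here. The function names, grid, sample positions, and weighting are illustrative assumptions and are not taken from any specific framework.

```python
import numpy as np

def helmholtz_residual(u, k, dx):
    """Finite-difference residual of u'' + k^2 u = 0 at interior grid points."""
    d2u = (u[2:] - 2.0 * u[1:-1] + u[:-2]) / dx**2
    return d2u + k**2 * u[1:-1]

def physics_informed_loss(u_pred, u_obs, obs_idx, k, dx, physics_weight=1.0):
    """Data misfit at observed points plus a penalty on the physics residual."""
    data_term = np.mean((u_pred[obs_idx] - u_obs) ** 2)
    physics_term = np.mean(helmholtz_residual(u_pred, k, dx) ** 2)
    return data_term + physics_weight * physics_term

# Illustrative setup (assumed values): 201 grid points, 5 "measured" samples.
x = np.linspace(0.0, 1.0, 201)
dx = x[1] - x[0]
k = 2.0 * np.pi
obs_idx = np.array([0, 50, 100, 150, 200])
u_obs = np.sin(k * x[obs_idx])

# Both candidates fit the five samples, but only the first obeys the equation:
# the added ripple vanishes at every sample position.
u_physical = np.sin(k * x)
u_unphysical = u_physical + 0.05 * np.sin(8.0 * np.pi * x)

loss_physical = physics_informed_loss(u_physical, u_obs, obs_idx, k, dx)
loss_unphysical = physics_informed_loss(u_unphysical, u_obs, obs_idx, k, dx)
```

A purely data-driven loss cannot tell the two candidates apart, because both match the samples. The physics term separates them by several orders of magnitude, which is the sense in which the physical law becomes part of the formulation.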

The key distinction is that physics is not optional. It is not something the model might “discover” if enough data is provided; it is an explicit part of the formulation. The role of machine learning is not to invent new physics, but to accelerate expensive computations in a way that remains consistent with established theory.
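One way to make physics an explicit part of the formulation is to build a constraint into the model's output stage, so that every prediction satisfies it by construction. The sketch below is an assumption-laden illustration, not the method of any particular paper or product: it takes a raw complex S-matrix, as a network might emit, and enforces two standard properties of passive, reciprocal devices, namely S = Sᵀ and a largest singular value of at most 1. All function names are hypothetical.

```python
import numpy as np

def enforce_reciprocity(s):
    """Symmetrise S so that S equals its transpose (reciprocal network)."""
    return 0.5 * (s + s.T)

def enforce_passivity(s):
    """Clip singular values at 1 so outgoing power never exceeds incoming power."""
    u, sigma, vh = np.linalg.svd(s)
    return (u * np.minimum(sigma, 1.0)) @ vh

def physical_output_layer(s_raw):
    """Hypothetical final stage: map any raw prediction to a physically valid S-matrix."""
    return enforce_passivity(enforce_reciprocity(s_raw))

# Random complex matrix standing in for an unconstrained model output;
# it is generally neither reciprocal nor passive.
rng = np.random.default_rng(0)
s_raw = rng.normal(size=(2, 2)) + 1j * rng.normal(size=(2, 2))
s_phys = physical_output_layer(s_raw)
```

Because the constraint sits in the output stage rather than in the training data, it holds for every input, including inputs the model has never seen.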

This shift from “AI as a generic predictor” to machine learning as a physics-aware computational tool is central to understanding why these methods are gaining traction in engineering environments, where accuracy and determinism are non-negotiable.

Physics-inspired AI sits at the intersection of high-performance computing, data-driven machine learning, and physics-based computational engineering. Rather than replacing physical models, it integrates machine learning within established solver frameworks to accelerate computational bottlenecks while preserving physical rigour.

Where physics-inspired AI adds real value and where it does not

The real value of physics-inspired machine learning in engineering is not that it replaces trusted numerical methods, but that it changes how time is spent during the design process. This distinction is especially important in early-stage antenna and electromagnetic design, where limited time often shapes decisions as much as physics itself.

In design workflows that involve large parameter spaces or repeated trade-off studies, a significant portion of effort can be spent on simulation setup and repeated execution, leaving less time for genuine design exploration.

As projects progress, exploration becomes iterative and slow, and engineers may be forced into premature design lock-in to meet deadlines. Fine-tuning and performance optimisation are then compressed into the final stages, when changes are most expensive.

Physics-inspired machine learning addresses this imbalance by targeting early-stage computational bottlenecks, rather than attempting to automate or replace the full design workflow.

Accelerating early-stage exploration

When machine learning is used as a physics-aware surrogate, it enables fast evaluation of viable design variants early in the process. Instead of running a full-wave solver for every candidate design, surrogate models, trained and constrained by physics, can rapidly estimate electromagnetic behaviour across a defined design space.

This allows engineers to:

- Explore more design alternatives in less time

- Gain earlier insight into sensitivities and trade-offs

- Avoid committing too early to suboptimal concepts

The benefit is not speed alone, but better decision-making at a stage where design flexibility is highest.
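The exploration pattern described above can be sketched in a few lines. The surrogate here is a hypothetical analytic stand-in, not a trained model: it uses the first-order half-wave patch resonance f0 = c / (2 L √εr) and an assumed Lorentzian-shaped dip to return |S11| in dB. The permittivity, bandwidth, target frequency, and candidate range are made-up values chosen only to illustrate the workflow.

```python
import numpy as np

def toy_surrogate_s11_db(patch_length_mm, freq_ghz, eps_r=2.2, bandwidth_ghz=0.5):
    """Hypothetical stand-in for a trained surrogate: returns |S11| in dB."""
    # First-order patch resonance with c = 300 mm*GHz, ignoring fringing fields.
    f0_ghz = 150.0 / (patch_length_mm * np.sqrt(eps_r))
    detuning = (freq_ghz - f0_ghz) / bandwidth_ghz
    return -20.0 / (1.0 + detuning**2)

# Evaluate every candidate at the target frequency in one vectorised call.
target_ghz = 30.0
candidates_mm = np.linspace(2.0, 4.0, 201)
s11_db = toy_surrogate_s11_db(candidates_mm, target_ghz)

# Keep the five best-matched candidates for later full-wave verification.
order = np.argsort(s11_db)
shortlist_mm = candidates_mm[order[:5]]
```

The point of the design is the division of labour: the cheap model ranks hundreds of variants, and only the shortlist is passed on to the full-wave solver.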

Exploration without replacing verification

Physics-inspired AI is most effective in early-stage design, where the goal is to understand trade-offs and explore the design space rather than to produce final, certifiable results. At this stage, fast, physically consistent estimates enable broader exploration and reduce the risk of premature design lock-in.

What these methods do not replace is final verification. Detailed analysis, edge cases, and design sign-off remain the responsibility of traditional, first-principles solvers. Physics-inspired AI is therefore not a shortcut around physics, but a way to use it more effectively, accelerating exploration while reserving high-accuracy simulation for where it matters most.

A practical example of this approach is presented in our recent paper “Physics-Constrained Neural Networks for Electromagnetic Surrogate Modeling.” The framework introduces compact, physics-compliant surrogate models capable of predicting spherical wave expansion coefficients and scattering parameters in real time, while ensuring Maxwell-consistent behaviour. A case study on a multilayer Ka-band patch antenna demonstrates how such models can accelerate early-stage exploration across a multi-parameter design space without compromising physical consistency.

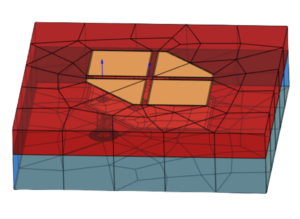

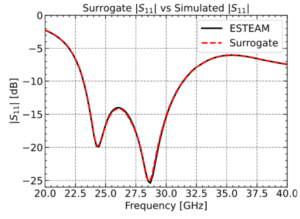

a) Complex slot patch antenna

b) Comparison of surrogate model predictions and high-fidelity TICRA Tools (ESTEAM) simulations for the exported design. A 40-point frequency sweep (20–40 GHz) was completed in 1 hour 36 minutes using a standard laptop. The surrogate closely matches the full-wave results, with a maximum deviation of 0.08 dB, primarily due to the limited resolution of the baseline simulation. Notably, the surrogate model generates each prediction in approximately 1 ms, enabling real-time computation and rapid design evaluation.
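A maximum-deviation figure of this kind is simply the largest absolute difference, in dB, between the surrogate curve and the reference curve over the sweep. The sketch below shows that check on synthetic curves; the numbers are invented for illustration and are not the data behind the figure, and the 0.1 dB tolerance is an assumed acceptance threshold.

```python
import numpy as np

def max_deviation_db(surrogate_db, reference_db):
    """Largest absolute difference between two dB curves on the same frequency grid."""
    surrogate_db = np.asarray(surrogate_db, dtype=float)
    reference_db = np.asarray(reference_db, dtype=float)
    if surrogate_db.shape != reference_db.shape:
        raise ValueError("curves must be sampled on the same frequency grid")
    return float(np.max(np.abs(surrogate_db - reference_db)))

# Synthetic 40-point sweep from 20 to 40 GHz (made-up curves, not measured data).
freqs_ghz = np.linspace(20.0, 40.0, 40)
reference_db = -10.0 - 5.0 * np.cos(2.0 * np.pi * (freqs_ghz - 20.0) / 20.0)
surrogate_db = reference_db + 0.05 * np.sin(freqs_ghz)

deviation_db = max_deviation_db(surrogate_db, reference_db)
within_tolerance = deviation_db <= 0.1  # assumed acceptance threshold
```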

Looking ahead: physics-inspired AI in computational electromagnetics

As computational engineering evolves, the core challenges remain unchanged: increasing model complexity, expanding design spaces, shorter development cycles, and ever-higher demands on accuracy. What physics-inspired AI changes is not the simulations themselves, but how efficiently engineers can work with them.

Its impact is unlikely to be disruptive overnight. Instead, progress will be incremental, driven by careful integration into established workflows. Rather than replacing solvers, these methods extend their reach, making high-fidelity physics available earlier in the design process and enabling faster, more interactive exploration.

A key trend is the emergence of hybrid modelling workflows, where numerical solvers, analytical methods, and machine-learning surrogates coexist. Each plays a distinct role: solvers provide reference accuracy, surrogates deliver speed, and physics ensures consistency and interpretability.
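One simple form such a hybrid workflow could take is a dispatcher that answers from the surrogate inside the parameter range it was trained on and falls back to the reference solver everywhere else. The class and the two toy callables below are hypothetical placeholders, written only to illustrate the division of roles; a real workflow would plug in an actual solver and a trained model.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

import numpy as np

@dataclass
class HybridEvaluator:
    """Routes each query to the surrogate or to the reference solver."""
    surrogate: Callable[[np.ndarray], float]
    solver: Callable[[np.ndarray], float]
    lower: np.ndarray  # lower bounds of the surrogate's training range
    upper: np.ndarray  # upper bounds of the surrogate's training range

    def evaluate(self, params) -> Tuple[float, str]:
        params = np.asarray(params, dtype=float)
        inside = bool(np.all((params >= self.lower) & (params <= self.upper)))
        if inside:
            return self.surrogate(params), "surrogate"
        # Outside the trained range the surrogate is not trusted.
        return self.solver(params), "solver"

def toy_solver(params):
    """Placeholder for an expensive reference computation."""
    return float(np.sum(params**2))

def toy_surrogate(params):
    """Placeholder for a fast approximation with a small, known error."""
    return float(np.sum(params**2)) + 1e-3

evaluator = HybridEvaluator(
    toy_surrogate, toy_solver, lower=np.array([0.0, 0.0]), upper=np.array([1.0, 1.0])
)
value_in, source_in = evaluator.evaluate([0.5, 0.5])
value_out, source_out = evaluator.evaluate([2.0, 0.5])
```

Returning the source alongside the value keeps the result traceable, so an engineer can always see whether a number came from the approximation or from the reference computation.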

In this context, machine learning is less "artificial intelligence" in the popular sense and more a computational method: a physics-aware numerical tool aligned with core engineering values such as transparency, validation, and repeatability.

For domains where simulation fidelity is non-negotiable, such as antenna and electromagnetic simulation, this physics-first approach aligns closely with the challenges addressed by TICRA. Here, machine learning is not an alternative to established solvers, but a way to extend their reach by enabling faster, more informed exploration while preserving physical accuracy.

A more detailed technical description of this framework is available in the paper:

Physics-Constrained Neural Networks for Electromagnetic Surrogate Modeling

This interactive example shows how physics-informed machine learning accelerates early-stage antenna design. Instead of replacing the EM solver, a surrogate model trained on solver data gives instant feedback on far-field response and S-parameters, allowing engineers to explore more before committing to full-wave validation.

Lasse Hjuler Christiansen, Head of AI and Machine Learning Team

Lasse leads TICRA’s AI and Machine Learning Team that drives the development of data-driven solutions across the TICRA Tools suite.